Tuesday, January 26, 2010

Slides from my ZISC talk on Black Swans in IT Security

Thursday, December 17, 2009

Recent Uploads to Scribd, Dec 17

- Threat Classification, Web Application Security Consortium

- Statistical-based approach to password guessing

- Clobbering the Cloud

- NIST Statistical Test Suite

- 2009 Key Management Report from True Catalyst

- iPhone Privacy presentation from Nicolas Seriot

Sunday, December 13, 2009

A USB Entropy Drive

A UK company called Simtec Electronics has created a USB thumb drive device with dedicated hardware for producing a continuous stream of high entropy bits, suitable for mixing into an existing entropy pool on your device or feeding directly into applications and protocols that require sources of randomness. The product is called Entropy Key and can be ordered from the website for £36.00, with further discounts for bulk orders.

An overview of the process for producing the entropy is given in the diagram below. There are two independent noise generators based on P-N junctions that are sampled at a high rate to produce a stream of bytes. The output of each generator and their XOR are subjected to the universal statistical bit test devised by Ueli Maurer. If the sequences pass this test then von Neumann’s debias trick is applied, and then another round of universal testing, followed by hashing with Skein. This process blocks at any stage if the computed statistics fall outside conservative estimates of the properties of random generators.
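The von Neumann debiasing step mentioned above can be sketched in a few lines. This is the textbook form of the trick, not Simtec's firmware: the function name and the choice to emit the first bit of each unequal pair are assumptions made for illustration.

```python
def von_neumann_debias(bits):
    """Textbook von Neumann extractor (illustrative sketch only).

    Bits are read in non-overlapping pairs. A pair of equal bits is
    discarded; a pair of unequal bits contributes its first bit.
    For independent bits with a fixed bias, the pairs (0, 1) and (1, 0)
    are equally likely, so the output is unbiased.
    """
    out = []
    for first, second in zip(bits[0::2], bits[1::2]):
        if first != second:
            out.append(first)
    return out


if __name__ == "__main__":
    raw = [0, 1, 1, 0, 0, 0, 1, 1, 1, 0]
    print(von_neumann_debias(raw))
```

The price of removing bias is throughput: at best a quarter of the input bits survive, which is one reason the output rate of such a device is variable.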

These steps are repeated until 20,000 bits have been collected, upon which the statistical tests recommended by FIPS 140-2 are applied. The 20,000 bit pool is then parcelled into blocks of 256 bits, and Simtec estimates that each such block has been generated from about 5,000 bits of noisy hardware samples.
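As a rough sketch of what testing a 20,000 bit pool can look like, here are two of the simpler FIPS 140-2 style checks (monobit and long run) followed by the parcelling into 256-bit blocks. The thresholds are the ones I recall from FIPS 140-2 and should be checked against the standard; the function names are hypothetical and this is not Simtec's code.

```python
POOL_BITS = 20_000
BLOCK_BITS = 256


def monobit_ok(bits):
    """Monobit check: the count of ones must be close to half the pool.

    Bounds (9725 < ones < 10275) are as recalled from FIPS 140-2;
    treat them as an assumption and verify before relying on them.
    """
    if len(bits) != POOL_BITS:
        raise ValueError("expected a 20,000 bit pool")
    return 9725 < sum(bits) < 10275


def long_run_ok(bits, limit=26):
    """Long run check: fail if any run of identical bits reaches `limit`."""
    longest = run = 1
    for prev, cur in zip(bits, bits[1:]):
        run = run + 1 if cur == prev else 1
        longest = max(longest, run)
    return longest < limit


def parcel(bits):
    """Split the pool into whole 256-bit blocks, dropping any remainder."""
    whole = len(bits) - len(bits) % BLOCK_BITS
    return [bits[i:i + BLOCK_BITS] for i in range(0, whole, BLOCK_BITS)]
```

A 20,000 bit pool yields 78 whole blocks of 256 bits with 32 bits left over; what the device does with the remainder is not stated in the description above.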

There is quite a reliance on Maurer’s universal statistical bit test, and perhaps justifiably so since this test is specifically designed to detect deviations from expected statistical properties in a bit generator by computing an estimate of the generator’s entropy using ideas from universal data compression algorithms. The test is quite simple, and there is a reasonable description and parameterization given in NIST SP 800-22, which also contains a description of a large number of other statistical tests. A research paper on a finer analysis of Maurer’s test can be found here.
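To give a feel for how simple the test is, here is a minimal sketch of the test statistic, following the description in NIST SP 800-22 with block length L = 6 and Q = 640 initialisation blocks. It computes only the statistic and omits the p-value calculation; the function names are my own, and the reference value 5.2177052 is the expected statistic for L = 6 as tabulated by NIST.

```python
import math
import os

EXPECTED_L6 = 5.2177052  # expected statistic for L = 6 (NIST SP 800-22 table)


def bytes_to_bits(data):
    """Unpack bytes into a list of bits, most significant bit first."""
    return [(byte >> k) & 1 for byte in data for k in range(7, -1, -1)]


def maurer_statistic(bits, L=6, Q=640):
    """Average log2 distance between repeats of each L-bit block."""
    n_blocks = len(bits) // L
    K = n_blocks - Q  # number of blocks used in the test segment
    if K <= 0:
        raise ValueError("not enough data for the chosen L and Q")

    def block_value(i):
        # Value of the i-th block, with blocks numbered from 1.
        value = 0
        for b in bits[(i - 1) * L:i * L]:
            value = (value << 1) | b
        return value

    last_seen = [0] * (1 << L)  # block index of the latest occurrence
    for i in range(1, Q + 1):  # initialisation segment
        last_seen[block_value(i)] = i

    total = 0.0
    for i in range(Q + 1, Q + K + 1):  # test segment
        v = block_value(i)
        total += math.log2(i - last_seen[v])
        last_seen[v] = i
    return total / K


if __name__ == "__main__":
    sample = bytes_to_bits(os.urandom(50_000))
    print(maurer_statistic(sample), "vs expected", EXPECTED_L6)
```

A good generator gives a statistic close to the tabulated value, while a compressible source gives a noticeably smaller one. A constant stream is the extreme case: every block repeats at distance 1 and the statistic collapses to zero.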

The output rate is, by its nature, variable but an average rate of more than 30 kilobits per second is expected. The complete client daemon source is provided under an MIT license, which means everyone is free to examine the code for themselves. Simtec also notes that the Entropy Key can automatically detect various physical attacks, such as temperature changes (by using a built-in temperature sensor) and opening of the case. The device is currently undergoing testing with “select customers” but is available for general ordering. There is an IRC channel #ekey on the OFTC network if you want to discuss any of this further.

(Thanks to Vincent Sanders for providing some more technical details)

Saturday, December 12, 2009

A Faraday Wallet

Do you remember the blocker tag? This was a proposal from researchers Ari Juels, Ron Rivest, and Michael Szydlo, all affiliated with RSA Data Security, published (and surely patented) in 2003. It was about this time that privacy concerns over the information that arbitrary RFID readers could passively collect about you and your habits were reaching their zenith. Your RFID-tagged items could be giving away a lot of information without your consent or knowledge.

Juels, Rivest, and Szydlo jokingly suggested that you could encase yourself in protective foil or mesh, which would make walking a bit more awkward but perhaps could be useful for wallets. And now we have just such a wallet, shown below.

It is advertised as an Identity Theft Preventing Privacy Wallet, retailing for $79.95. The product advertising states that

Most credit cards now contain tiny chips that provide a unique identifier that can be scanned to retrieve your billing details.

Such RFID technology can, unfortunately, also be corrupted by hackers using portable scanners in crowded airports, restaurants, and stadiums. Our slim and surprisingly lightweight Privacy Wallets are literally woven from over 20,000 super-fine strands of stainless steel into a flexible fabric that feels like silk — but protects your ID like armor plate! Stronger than leather, with no sharp edges or corners — and won’t stain or scratch.

Inside, 6 credit card slots, 2 internal slots, and a billfold let you carry plenty of purchasing power — completely shielded from today’s high-tech pickpockets.

This could go well with your swine flu suit.

Thursday, December 10, 2009

The Crypto Year in Review from Bart Preneel

Preneel then considers hash functions, the topic of his PhD thesis. He gives a benchmark slide showing the complexity of collision attacks against well-known hash functions, assuming a $100K USD funded adversary using special hardware (equivalent to 4 million PCs).

The downward trend of the graphs is suggestive of a meltdown for hash functions, with the worst implications for protocols and signatures yet to play out. The transition path to a better hashing standard via the NIST SHA-3 hash contest is underway, and a new standard will be selected by 2012. However, Preneel is concerned that the design of SHA-3 will be based on the state of the art from 2008; that is, all of the additional insights and learnings produced in the evaluation of the SHA-3 candidates will not be exploited until SHA-4.

Monday, December 7, 2009

WPA Password Cracking in the Cloud for $34

Following on from the recent results of a project on the feasibility of password cracking using cloud computing, a new cloud service for cracking WPA passwords has been announced by researcher Moxie Marlinspike, best known for his work on discovering subtle flaws in the SSL protocol. The cloud service attempts to crack uploaded passwords against a 135 million word WPA-optimized dictionary on a 400 CPU cluster.

The stated purpose of the service is to assist penetration testers and network auditors who need to test the strength of WPA passwords that use pre-shared keys (PSK or personal mode). In this mode, the PSK master key is used to derive a session key from several parameters in the initial wireless connection handshake, including the MAC addresses of the client and base station. In practice, the PSK is a password and is therefore exposed to a brute force or dictionary attack.

Verifying a guess for the PSK password is relatively costly, roughly the equivalent of processing a megabyte of data, since the session key is derived from 4096 iterated hash operations. This high iteration count, or spin, limits the number of password trials to several hundred per second on a standard desktop. Marlinspike's cloud service searches through a 135 million word dictionary in just 20 minutes, a computation that would otherwise take 5 days or so on a standard desktop.
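The timings quoted above can be sanity-checked with some simple arithmetic. The sketch below assumes a desktop rate of 300 guesses per second as a stand-in for "several hundred"; that figure is my assumption, as are the names used.

```python
DICTIONARY_WORDS = 135_000_000
CLOUD_CPUS = 400
CLOUD_MINUTES = 20

# Assumed desktop rate, standing in for "several hundred" trials per second.
DESKTOP_GUESSES_PER_SECOND = 300


def days_to_search(words, guesses_per_second):
    """Time to try every dictionary word once, in days."""
    return words / guesses_per_second / 86_400


# Desktop: full dictionary at the assumed rate.
desktop_days = days_to_search(DICTIONARY_WORDS, DESKTOP_GUESSES_PER_SECOND)

# Cloud: the rate implied by finishing the dictionary in 20 minutes.
cloud_rate = DICTIONARY_WORDS / (CLOUD_MINUTES * 60)
per_cpu_rate = cloud_rate / CLOUD_CPUS

if __name__ == "__main__":
    print(f"desktop: about {desktop_days:.1f} days")
    print(f"cloud: {cloud_rate:.0f} guesses/s, {per_cpu_rate:.2f} per CPU")
```

Under these assumptions the figures hang together: each cloud CPU needs to manage only a few hundred guesses per second, much like a desktop, so the speed-up comes from parallelism rather than from any shortcut in the key derivation.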

And all this for just $34, after a client has uploaded a capture of the WPA handshake on the network of interest. If the cloud service does not find a password then a client can be confident that the submitted password is resistant to dictionary attacks – no refund, you still pay for the assurance!

The Church Of Wifi has a project to create public rainbow tables for WPA based on common passwords, but the resulting tables only encode one million passwords because the tables must be recomputed for each network identifier (ESSID), which acts as salt in the password derivation function. Marlinspike opted to create his own WPA-optimized dictionary list which includes word combinations, phrases, numbers, symbols, and “elite speak”.
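The salting effect is easy to see in code. In WPA personal mode the 256-bit master key is derived from the passphrase with PBKDF2 using HMAC-SHA1, the network name as salt, and 4096 iterations. The sketch below uses Python's standard `hashlib.pbkdf2_hmac`; the function name `wpa_master_key` and the example network names are illustrative only.

```python
import hashlib


def wpa_master_key(passphrase, network_name):
    """Derive the 32-byte WPA-PSK master key from a passphrase.

    The network name is the salt, so the same passphrase produces a
    different key on every differently named network.
    """
    return hashlib.pbkdf2_hmac(
        "sha1",
        passphrase.encode("utf-8"),
        network_name.encode("utf-8"),
        4096,  # iteration count
        32,    # output length in bytes
    )


if __name__ == "__main__":
    for name in ("linksys", "NETGEAR"):
        print(name, wpa_master_key("password", name).hex())
```

Because the two outputs differ, a table precomputed for one network name is useless against another, which is why a public table has to pick a list of common names and repeat the whole computation for each.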

In Security Muggles I posted about finding ways to connect with management about security. Robert Graham, CEO of penetration testing company Errata Security, has commented: "When I show this to management and say it would cost $34 to crack your WPA password, it's something they can understand. That helps me a lot."

PC World has somewhat dramatically reported the announcement as New Cloud-based Service Steals Wi-Fi Passwords, suggesting that the cloud is reaching into wireless networks and grabbing passwords. In fact, the service is designed to trade off a 5-day desktop computation against a 20-minute cloud computation for $34. Nonetheless, a better name would be WPA Auditor rather than WPA Cracker.

Sunday, December 6, 2009

A Threat Model for SSL

I traced a link to my post How fast are Debian-flawed certificates being re-issued? to SSL Shopper, a new site for me, and I looked back through their news items and found a link to an SSL Threat model proposed by Ivan Ristić, the principal author of ModSecurity and a leader in Apache security.

Referring to the origins of his threat model, Ivan states that

SSL is easy to use but also very easy to use incorrectly. The ecosystem, which is built of the specifications, the implementations, the CAs and the PKI, is full of traps, each of which is very easy to fall into. Once I started to spend significant time thinking about SSL I set out to build a model of the ecosystem, for my own education and to ensure that I understand it all. That's how I arrived to the SSL Threat Model.

His threat model is represented as a FreeMind map, and is available as a graphic, shown below. The threat model considers clients, servers, PKI, protocols, users and attacks, and perhaps needs to be updated in light of the new TLS renegotiation attack.

Ivan admits that the model needs some additional clarification, but it is probably more useful as a published draft rather than waiting for him to find the time to perfect the model (the same reasoning led me to release my outline of a password book).

Tidbits: A5/1, WhiteListing and Google

There is a nice write-up in the very respectable IEEE Spectrum on the A5/1 rainbow table generation project run by Karsten Nohl for cracking GSM encryption. The write-up for Nohl in IEEE Spectrum is a signal that his project has mainstream acceptance and attention. The timeliness of Nohl’s project was heightened a few weeks ago when MasterCard announced that they would be using GSM as an additional authentication factor in their transactions. This MasterCard feature may end up being very popular since IDC recently predicted that the mobile phone market will exceed one billion handsets by the end of 2010. Hopefully this huge demand will increase the deployment of the stronger A5/3 algorithm that is being phased in during upgrades to 3G networks.

The evidence continues to mount that whitelisting is an idea whose time has come. Roger Grimes at InfoWorld published a large list of reviews on whitelisting products, and he was pleasantly surprised that “whitelisting solutions are proving to be mature, capable, and manageable enough to provide significant protection while still giving trustworthy users room to breathe”. Given that the amount of malware introduced in 2008 exceeded all known malware from previous years, viable alternatives to the current blacklisting model are needed.

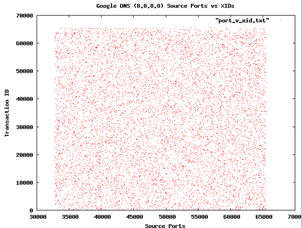

Finally, Google recently announced that it will be offering its own DNS service, nominally to increase performance and security. Sean Michael Kerner wonders whether Google DNS is resistant to the attacks reported by Dan Kaminsky last year, where common DNS implementations relied on poor random numbers. H. D. Moore has forwarded Kerner some graphs he made from sampling Google DNS for randomness. First, a plot on port scanning

In both cases the graphs look quite random, and so both Kerner and Moore conclude that Google seems to be on the right track here. But Moore concedes that more testing needs to be done.

November Blog Round-Up

I normally don’t post monthly blog summaries, but last month there seemed to be quite a bit to write about. It was a good month for visits and page views, so thank you to everyone. Here is the No Tricks dashboard from Google Analytics (click to enlarge).

And a review of the posts

Opinion and Analysis

- The Other Google Desktop

- How fast are Debian-flawed certificates being re-i...

- The TLS Renegotiation Attack for the Impatient

- More on TMTO and Rainbow Tables

- The Internet Repetition Code

- Security FreeMind map sources

- Not so sunny for Whit Diffie

- Quadratic Football Revisited

- FUDgeddaboudit

- MasterCard bets on A5/1

- TLS Renegotiation Attack Whitepaper

- How big is 2^{128}?

Visualisations

- Visualisations of Data Loss

- Navigation map of the Cloud Ecosystem

- Growth of Wal-mart across America

- Death Star Threat Modeling

- mini-Bruce for $89

News

- Improving Blog Navigation

Wednesday, December 2, 2009

Google Maps and Crypto Laws Mashup

Simon Hunt, VP at McAfee, has a great Google map mashup application that visually maps crypto laws to countries around the world, including individual US states. The map was last updated in September.

Monday, November 30, 2009

Visualisations of Data Loss

The detailed data collected by DataLossDB.org has been uploaded to two of the popular sites for visualization of data. The first is Swivel, and the graph from here shows the number of breached records as a percentage of the TJX incident where 45 million records were compromised (this is represented as 100% on the graph)

The “spikey” nature of the losses was identified by Voltage Security as following a log-normal distribution. The DataLossDB data has also been uploaded to Many Eyes (several times it seems), and searching on data loss gives several graphs, like this one for data loss by type

You can use the loss data set at Many Eyes to produce other graphs of your own choice. Lastly, Voltage has a great graphic showing another representation of the relative size of data losses, which has a fractal feel to it

TLS Renegotiation Attack Whitepaper

I recently gave a simplified review of the TLS renegotiation attack. For additional technical details on the attack I recommend the 17-page whitepaper TLS and SSLv3 Vulnerabilities Explained by Thierry Zoller. He makes good use of protocol flow diagrams and considers the implications of the attack for HTTP, FTP and SMTP. He also describes fixes for the attack and a simple method to test for vulnerable servers using OpenSSL.

Sunday, November 29, 2009

How big is 2^{128}?

I came across a 2006 email thread from Jon Callas, CTO of PGP, trying to dispel the perception that a government agency might have the computing power to break 128-bit keys. He recounts a characterization of the required effort, which he attributes to Burt Kaliski of RSA Data Security (now part of EMC)

Imagine a computer that is the size of a grain of sand that can test keys against some encrypted data. Also imagine that it can test a key in the amount of time it takes light to cross it. Then consider a cluster of these computers, so many that if you covered the earth with them, they would cover the whole planet to the height of 1 meter. The cluster of computers would crack a 128-bit key on average in 1,000 years.

So that’s 1,000 years of computation by a cluster that would envelop the earth to a height of one metre.

Callas’ point was that modern cryptosystems are essentially unbreakable against brute force attacks, and speculating over the computational power of three letter agencies against 128-bit keys is verging on paranoia. Breaking passwords – that protect accounts, data or larger cryptographic keys – is a much more credible scenario to consider. Callas claims that two thirds of people use a password related to a pet or loved one, and there is no need for a planet-sized cluster to guess those.
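For a feel of the numbers with more mundane hardware, here is a small back-of-the-envelope sketch. The rate of 10^12 key trials per second is a purely hypothetical figure chosen for illustration, and the function name is my own.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def average_years_to_break(key_bits, keys_per_second):
    """Expected brute-force time: on average half the key space is searched."""
    return 2 ** (key_bits - 1) / keys_per_second / SECONDS_PER_YEAR


if __name__ == "__main__":
    rate = 1e12  # hypothetical trials per second, an assumption
    for bits in (40, 56, 128):
        years = average_years_to_break(bits, rate)
        print(f"{bits}-bit key: {years:.3g} years on average")
```

At that assumed rate a 40-bit key falls in under a second, while the 128-bit figure comes out many orders of magnitude beyond the age of the universe. A dictionary of likely passwords, by contrast, is searched almost instantly at the same rate.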

Friday, November 27, 2009

The Other Google Desktop

Two weeks ago the Economist ran an interesting article Calling All Cars, describing how systems such as OnStar (GM) and Sync (Ford) that were conceived for roadside assistance have expanded beyond their original service offerings to include remote tracking and deactivation (car won’t start), slowing down a moving car to a halt (no high speed chases), fault diagnosis and timely servicing. Even so, 60,000 OnStar subscribers a month still use the service to unlock their cars – the auto equivalent of password resets.

I have often wondered why AV vendors have not leveraged their platforms and infrastructure significantly beyond their initial offerings in the same way. The larger vendors that service enterprise customers have a sophisticated update network for clients, that feeds into corporate networks for secondary distribution internally. Desktops are equipped with software for searching against a database of signatures, accepting or initiating dynamic updates, plus monitoring and reporting. Surely this is a basis for useful enterprise applications beyond the necessary but not so business-friendly task of malware scanning?

It is widely reported that the traditional AV signature cycle of detect-produce-distribute is being overwhelmed, and the effectiveness of AV solutions is decreasing. So AV companies should be on the lookout for new and perhaps non-traditional functionality. But even if this were not the case, it would be worthwhile to consider additional services bootstrapped off the installed base.

I think one generalization would be the extension of the search capability away from signature matching towards a Google desktop model - away from search-and-destroy to search-and-deliver. Imagine if Norton or Kaspersky were presented to users as document management systems permitting tagging, search of file content, indexing, and database semantics for files – that is, provide a useful service to users beyond informing them that opening this file would not be a good idea.

In the corporate setting, desktop document search and analysis could provide many useful functions. Let’s take data classification for example. I am not sure if we can ever expect people to label documents for data sensitivity. Even if people were resolved to be diligent in the new year, there would still be a large legacy problem. Imagine now that senior management could create a list of sensitive documents and then feed them into an indexer, which distributed data classification “signatures” to desktops. The local software can scan for matching documents (exact or related), create the correct labelling and perhaps even inform the users that such documents should be dealt with carefully, perhaps even pop-up the data classification policy as a reminder.
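As a minimal sketch of the exact-match case, a data classification "signature" could simply be a cryptographic hash of each sensitive document, distributed to desktops and compared against hashes of local files. Everything here is hypothetical (the function names, the labels, and the use of SHA-256), and it says nothing about how any AV product actually works.

```python
import hashlib


def signature(content: bytes) -> str:
    """Signature of a document: here just the SHA-256 of its bytes."""
    return hashlib.sha256(content).hexdigest()


def build_signatures(sensitive_docs: dict) -> dict:
    """Management side: map each document's signature to its label."""
    return {signature(body): label for label, body in sensitive_docs.items()}


def scan_desktop(files: dict, signatures: dict) -> dict:
    """Desktop side: return {path: label} for files matching a signature."""
    hits = {}
    for path, body in files.items():
        label = signatures.get(signature(body))
        if label is not None:
            hits[path] = label
    return hits


if __name__ == "__main__":
    sigs = build_signatures({"CONFIDENTIAL: Q3 draft": b"quarterly results draft"})
    local = {"report.doc": b"quarterly results draft", "menu.txt": b"lunch menu"}
    print(scan_desktop(local, sigs))
```

A plain hash only catches byte-for-byte copies. Detecting related documents, such as an edited draft, would need some form of similarity or fuzzy hashing, which is a considerably harder problem.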

You could also track the number of copies and location of a given sensitive document, such as drafts of quarterly financial results, which must be distributed for review but only within a select group. Again management could define which documents need to be tracked and feed them into a signature engine for propagation to desktops. If a document fell outside the defined review group, then a flag could be raised. When a sensitive document is detected as being attached to an email the user can be reminded that the contents should be encrypted, certainly if the recipients are external to the company, and perhaps prevented from even sending the document at all.

The general innovation here is to permit client management to define search and response functions for deployment within the AV infrastructure, extending beyond the malware updates from the vendor. I think there are many possible applications for managing documents (and other information) on the basis of the present AV infrastructure for distribution, matching and scanning, especially if local management could create their own signatures.

I have to admit that I am not overly familiar with DLP solution capabilities, and perhaps some of my wish list is already here today. I would be glad to hear about it.

Thursday, November 26, 2009

Death Star Threat Modeling

I was searching through the entries in my Google Notebook and came across a link to Death Star Threat Modeling, a series of three videos from 2008 on risk management by Kevin Williams. Sometimes we struggle to explain the notions of threat, vulnerability and consequence, but sit back and let Kevin take you through a tour of these concepts in terms of Star Wars and the defence of the Death Star. There are 3 videos and the link to the first is here, and the other two are easily found in the Related Videos sidebar.