This year is the 30th anniversary of the journal publication for the RSA cryptosystem:

R. Rivest, A. Shamir, L. Adleman. A Method for Obtaining Digital Signatures and Public-Key Cryptosystems. Communications of the ACM, Vol. 21 (2), pp.120–126. 1978.

This is one of the most cited papers in all of computer science. The authors are pictured above around the time their RSA paper was published (Shamir, Rivest, Adleman - left to right). I learned about the mathematics of RSA in 1985 as part of a cryptography course based on Dorothy Denning's excellent introductory text Cryptography and Data Security. Over the years quite a few security professionals have confided in me that they do not really understand the basic mathematics of how RSA works. In this post I hope that I can tell the RSA story one more time, in a way that makes it easier to understand.

Background

Let E be a generic encryption operation, and let D be its corresponding decryption operation. For any message M, a basic property of any cryptosystem is that

M = D(E(M))

or in words, that decrypting an encrypted message yields the original message. We can then think of D(E( )) as a self-mapping, taking messages M to themselves under the action of some key. To explain RSA we are going to look at several well-known self-mapping functions from number theory, and then show how we can decompose these self-maps into two parts, which can be thought of as encryption and decryption.

All references below to numbers will mean non-negative integers: 0, 1, 2, 3 and so on. Number theory is mainly concerned with these numbers and their properties. Also, we will use the terms number and message interchangeably, since any message has a binary representation that can be interpreted as a number (see the standard PKCS #1 on a specific method for doing this).

Modular Exponentiation

Our source of self-mappings will be derived from modular exponentiation, the fundamental operation in many public key systems including RSA, DSA, Diffie-Hellman, and El Gamal systems.

We start with the simpler notion of exponentiation. Exponentiation is the operation of raising a base M to an exponent e, written as M^e, which simply means the product of e copies of M. When e = 2 exponentiation is called squaring, and when e = 3 it is called cubing. For larger values of e we also describe M^e as "raising M to the e-th power".

There are two rules which can be used to simplify exponentiations, both of which are used in RSA: the product rule

M^(e*d) = (M^e)^d

and the sum rule

M^(e + d) = (M^e) * (M^d).

In modular exponentiation we are given an additional parameter m and we need to compute M^e mod m. For any value x, x mod m is the remainder left after dividing x by m. So 17 mod 5 = 2 since 17 = 3*5 + 2, or in words, 5 goes into 17 three times with 2 left over (the remainder). Here m is called the modulus. So M^e mod m means to first compute M^e and then return the remainder upon dividing by m.
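To make the definition concrete, here is a small Python sketch. The function name `mod_exp` and the sample values are my own choices for illustration. Rather than computing M^e in full and reducing at the end, the sketch reduces after every multiplication (the square-and-multiply method), which gives the same remainder while keeping the intermediate numbers small. Python's built-in `pow(M, e, m)` computes the same value.

```python
def mod_exp(base, exponent, modulus):
    """Compute base**exponent % modulus by square-and-multiply."""
    result = 1 % modulus          # handles the corner case modulus == 1
    base %= modulus
    while exponent > 0:
        if exponent & 1:          # lowest bit set: multiply this power in
            result = (result * base) % modulus
        base = (base * base) % modulus   # square for the next bit
        exponent >>= 1
    return result


print(17 % 5)                 # 2, the remainder example from the text
print(mod_exp(4, 13, 497))    # 445
print(pow(4, 13, 497))        # 445, the built-in agrees
```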

Flawed RSA v1

After that introduction, we need one simple result to take us forward, called Fermat's Little Theorem: let p be a prime and let M be any value in the range 0 < M < p, then

M^(p - 1) = 1 mod p

Since M^p = M^(p-1) * M by the exponentiation sum rule, and M^(p-1) = 1 mod p it then follows that

M^p = M mod p.
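If you would like to convince yourself of this numerically, the following sketch checks both statements for every M in the range 0 < M < p. The prime p = 61 is just a sample value I picked, and a check like this is of course evidence for one prime, not a proof.

```python
p = 61  # sample prime chosen for illustration

# Fermat's Little Theorem: M^(p-1) = 1 mod p for all 0 < M < p
fermat_holds = all(pow(M, p - 1, p) == 1 for M in range(1, p))

# The resulting self-mapping: M^p = M mod p
self_map_holds = all(pow(M, p, p) == M for M in range(1, p))

print(fermat_holds, self_map_holds)  # True True
```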

This is good news since we have now found a self-mapping function. Can we somehow decompose this self-map into two parts, one that we can call encryption and the other decryption?

Well imagine that Alice can find two values e and d such that e*d = p, and let Alice publish the pair (e, p) as her public exponent and her public modulus, respectively. If Bob wants to send her a message M she instructs him to encrypt it using the following modular exponentiation

C = M^e mod p

and Bob then sends C to Alice. When Alice receives C she recovers M by the following modular exponentiation

M = C^d mod p.

But why does this operation return M to Alice? Well, it's because

C^d = (M^e)^d = M^(e*d) = M^p = M mod p.

Here we have used the exponentiation product rule: the exponentiation applied by Bob is itself exponentiated by Alice to return the original message.

But we have a snag. We said that Alice found e and d such that e*d = p, but since p is prime then the only possible solutions for e and d are 1 and p. But if e = 1 or e = p then the encryption operation M^e mod p applied by Bob has no effect on M since

M^1 = M^p = M mod p.

Encryption would be doing nothing.

But here is the key observation.

The property that permits Alice to recover M is not e*d = p but rather that

e*d = (p - 1) + 1 = 1*(p-1) + 1

and Alice can still recover M if e*d is equal to 2*(p-1) + 1, or 3*(p-1) + 1, or k*(p-1) + 1 for any k > 0. Why? Well using the product rule we have that

M^(k*(p-1)) = M^((p-1)*k) = (M^(p-1))^k = 1^k = 1 mod p,

M^(k*(p-1) + 1) = M^(k*(p-1)) * M = 1 * M = M mod p.
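A quick sketch of this observation: for a sample prime (p = 61 is my choice) every exponent of the form k*(p-1) + 1 maps each message back to itself. The helper name `recovers_message` is hypothetical.

```python
p = 61  # sample prime chosen for illustration


def recovers_message(exponent, p):
    """True if M^exponent = M mod p for every M in the range 0 < M < p."""
    return all(pow(M, exponent, p) == M for M in range(1, p))


# Try the exponents k*(p-1) + 1 for k = 1, 2, 3, 4, 5
results = [recovers_message(k * (p - 1) + 1, p) for k in range(1, 6)]
print(results)  # [True, True, True, True, True]
```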

So we are back in business if we can find e and d such that e*d = k*(p-1) + 1, for some k > 0. But using modular arithmetic, this is the same as saying that

e*d = 1 mod (p - 1).

As it turns out there is something called the Euclidean algorithm which (in its extended form) is a method for efficiently solving for x in modular equations of the form a*x = b mod m. For b = 1 a solution exists exactly when a and m share no factors. So we can just pick a random value for e in the range 1 < e < p - 1 that shares no factors with p - 1, and then use the Euclidean algorithm to find the corresponding value of d for which e*d = 1 mod (p - 1).
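For readers who want to see how d is actually found, here is a minimal sketch of the extended form of the Euclidean algorithm. The function names `extended_gcd` and `mod_inverse` are mine, and this is one common way to write it rather than the only one. Note that an inverse exists only when e and the modulus share no factors.

```python
def extended_gcd(a, b):
    """Return (g, x, y) such that g = gcd(a, b) and a*x + b*y = g."""
    old_r, r = a, b
    old_x, x = 1, 0
    old_y, y = 0, 1
    while r != 0:
        quotient = old_r // r
        old_r, r = r, old_r - quotient * r
        old_x, x = x, old_x - quotient * x
        old_y, y = y, old_y - quotient * y
    return old_r, old_x, old_y


def mod_inverse(e, m):
    """Solve e*d = 1 mod m for d, or raise if no solution exists."""
    g, x, _ = extended_gcd(e, m)
    if g != 1:
        raise ValueError("e and m share a factor, so e has no inverse mod m")
    return x % m


print(mod_inverse(17, 60))  # 53
```

On Python 3.8 or later the built-in `pow(17, -1, 60)` returns the same value.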

Let's see an example of this. Let p = 61 and select e = 17, which shares no factors with p - 1 = 60. Then we have that d = 53 since 17 * 53 = 1 mod 60, or more specifically, 17 * 53 = 901 = 15 * 60 + 1. So encryption would then be defined as C = M^17 mod 61 and decryption defined as M = C^53 mod 61. Here our messages would need to be less than 6 bits in length, since M must be smaller than 61.
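Here is the same example as a short sketch. The message M = 42 is an arbitrary value I chose, and `pow(e, -1, m)` for the modular inverse assumes Python 3.8 or later.

```python
p, e = 61, 17

# Solve e*d = 1 mod (p - 1); requires Python 3.8+
d = pow(e, -1, p - 1)
print(d)  # 53

M = 42                     # arbitrary sample message, must satisfy 0 < M < p
C = pow(M, e, p)           # Bob encrypts: C = M^17 mod 61
recovered = pow(C, d, p)   # Alice decrypts: M = C^53 mod 61
print(recovered == M)      # True

# The round trip works for every message in range
round_trip_ok = all(pow(pow(m, e, p), d, p) == m for m in range(1, p))
print(round_trip_ok)       # True
```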

But now we have another snag - quite a serious one this time. When Alice publishes her public key (e, p), what distinguishes her from other people is her knowledge of her decryption key d. But since e*d = 1 mod (p - 1) then another person Carol can easily determine d by simply using the Euclidean algorithm to solve e*d = 1 mod (p - 1) since e and p - 1 can be directly derived from the public (e, p).
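To see just how little work Carol has to do, here is a sketch of her attack on the toy parameters. She uses only the public pair (e, p) and an intercepted ciphertext. The function name `carol_recovers_d` and the secret message value are hypothetical, and the modular inverse via `pow` assumes Python 3.8 or later.

```python
def carol_recovers_d(public_e, public_p):
    """Derive the decryption exponent from the public values alone."""
    return pow(public_e, -1, public_p - 1)   # solve e*d = 1 mod (p - 1)


public_e, public_p = 17, 61      # everything Alice published

secret_message = 42              # known only to Bob (sample value)
intercepted = pow(secret_message, public_e, public_p)

carol_d = carol_recovers_d(public_e, public_p)
carol_reads = pow(intercepted, carol_d, public_p)
print(carol_d)                        # 53
print(carol_reads == secret_message)  # True
```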

To fix this snag we need to change our tactics and move from a public modulus that is a prime p to a value n = p*q that is the product of two primes p and q.

Correct RSA v2

The importance of Fermat's Little Theorem in the last section is that it gave us a self-mapping modulo a prime p. There is a generalisation of this result for composite numbers known as Euler's Theorem: for any n, let a be any value in the range 0 < a < n that shares no factors with n, then

a^phi(n) = 1 mod n

where phi(n) is the number of values in the range 1 to n that share no factors with n. Note that for a prime p we have phi(p) = p - 1, so Euler's Theorem includes the result of Fermat's Little Theorem as a special case.
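The definition of phi(n) translates directly into code. The sketch below counts by brute force, which is fine for toy values but hopeless for numbers of cryptographic size. The composite n = 15 is a sample value I picked.

```python
from math import gcd


def phi(n):
    """Count the values in 1..n that share no factors with n."""
    return sum(1 for a in range(1, n + 1) if gcd(a, n) == 1)


print(phi(61))  # 60, i.e. p - 1 for a prime
print(phi(15))  # 8, the values 1, 2, 4, 7, 8, 11, 13, 14

# Euler's Theorem for n = 15: a^phi(n) = 1 mod n whenever gcd(a, n) = 1
n = 15
euler_holds = all(
    pow(a, phi(n), n) == 1 for a in range(1, n) if gcd(a, n) == 1
)
print(euler_holds)  # True
```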

If we let n = p*q for two distinct primes p and q then it is easy to show that phi(n) = (p-1)*(q-1). Now we can repeat the construction in the previous section by replacing computations with p - 1 (derived from Fermat's Little Theorem) with computations based on phi(n). To this end we select a random e in the range 1 < e < phi(n) that shares no factors with phi(n), and use the Euclidean algorithm to solve for d such that

e*d = 1 mod phi(n) or e*d = 1 mod (p-1)(q-1).

Alice's public key becomes (e, n) and Bob can encrypt a message M for her as C = M^e mod n. Alice decrypts the message as M = C^d mod n which works because

C^d = (M^e)^d = M^(e*d) = M^(k*phi(n)+1 ) = 1^k * M = M mod n.

Let's illustrate these principles with the RSA example from Wikipedia. Let Alice choose as her two primes p = 61 and q = 53. Then n = 61 * 53 = 3233 and phi(n) = (61 - 1)*(53 - 1) = 3120. If Alice selects her public exponent to be e = 17 then d = 2753 since

17 * 2753 = 46801 = 15 * 3120 + 1.

So the public key of Alice is then (17, 3233) and anyone can encrypt a message for Alice by computing C = M^17 mod 3233. Alice recovers the message by computing M = C^2753 mod 3233.
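The whole example fits in a few lines of Python. This is a toy sketch of the textbook construction only: the function names `encrypt` and `decrypt` are mine, the message M = 65 is a sample value, there is no padding, and the modular inverse via `pow` assumes Python 3.8 or later.

```python
from math import gcd

# Alice's key generation with the toy primes from the text
p, q = 61, 53
n = p * q                    # 3233
phi_n = (p - 1) * (q - 1)    # 3120
e = 17
assert gcd(e, phi_n) == 1    # e must share no factors with phi(n)
d = pow(e, -1, phi_n)        # solve e*d = 1 mod phi(n)
print(n, phi_n, d)           # 3233 3120 2753


def encrypt(M, e, n):
    """Bob's side: C = M^e mod n."""
    return pow(M, e, n)


def decrypt(C, d, n):
    """Alice's side: M = C^d mod n."""
    return pow(C, d, n)


M = 65                       # sample message, must be smaller than n
C = encrypt(M, e, n)
print(C)                     # 2790
print(decrypt(C, d, n))      # 65
```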

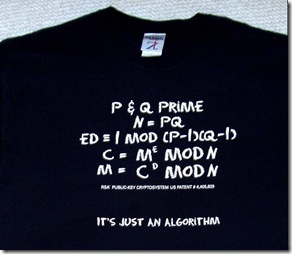

By now you should be able to follow this T-shirt description of RSA.

Let's think about Carol again. Can she easily determine Alice's private key d from her public parameters (e, n)? Well if she could determine phi(n) from n then she could use the Euclidean algorithm to solve for d in the equation e*d = 1 mod phi(n). But as was shown in the original RSA paper, if Carol could derive phi(n) from n then this would be equivalent to Carol having the power to factor n, which is widely believed to be a computationally hard problem (although no proof of this is known).
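One direction of that equivalence is easy to sketch. Since phi(n) = (p-1)*(q-1) = n - (p + q) + 1, knowing phi(n) reveals the sum p + q, and together with the product n = p*q this pins down p and q as the roots of a quadratic. The function name `factor_from_phi` is hypothetical, and `math.isqrt` assumes Python 3.8 or later.

```python
from math import isqrt


def factor_from_phi(n, phi_n):
    """Recover the primes p and q from n = p*q and phi(n) = (p-1)*(q-1)."""
    prime_sum = n - phi_n + 1                  # p + q
    discriminant = prime_sum ** 2 - 4 * n      # equals (p - q)^2
    root = isqrt(discriminant)
    if root * root != discriminant:
        raise ValueError("inputs are not consistent with n = p*q")
    p = (prime_sum + root) // 2
    q = (prime_sum - root) // 2
    if p * q != n:
        raise ValueError("inputs are not consistent with n = p*q")
    return p, q


print(factor_from_phi(3233, 3120))  # (61, 53)
```

So anyone who can compute phi(n) from n can also factor n, which is why Carol's shortcut is no easier than factoring itself.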

We have left out many technicalities in this description of RSA, and to implement RSA in practice you need to solve many other issues, such as how to find large primes. For RSA to be secure it must be the case that n is sufficiently large to defeat the best known factoring algorithms. Using current estimates p and q should be at least 500 bits in length, and often over 1000 bits for additional security. The construction for RSA as outlined above is totally general - you can pick the primes as small or large as you like - the math still all goes through. However, larger keys take longer to process.