Quantum computing ringing the imminent death knell of encryption is a familiar refrain in the popular press. The end of a dynasty is close at hand. A recent article in IT News is typical when it states that advances in quantum computing are likely to "render[ing] digital encryption techniques all but obsolete". Not quite.

Quantum computers are very different from the ones we have on our desktops now, usually referred to as classical computing devices. A potential misconception is to think that quantum computers will teleport us to a future where the benefits of Moore's Law compounded over several centuries can be reaped. Intel's leading chip line, the x86, has steadily progressed from 286, 386, 486, Pentium and so on, but quantum computers will not be "1000-86" devices - unimaginably faster versions of what we have today.

Quantum computers are built from different computational primitives than our present devices. It is difficult to formally explain these differences but the main idea is that quantum computers can operate in many states simultaneously whereas classical computers are built from (bipolar) components that are interpreted to operate in one of two states - 0 or 1, on or off.

The massive parallelism furnished by quantum logic cannot as yet be used to solve general computational problems. A specific quantum algorithm must be tailored for each problem class of interest - such algorithms are more a bespoke than an off-the-shelf technology. The quantum algorithm that has received the most attention with respect to security was devised by Peter Shor, and shows that factoring can be done efficiently on a quantum computer. When you read that current encryption methods will be rendered useless by quantum computation, the authors are usually referring to Shor's algorithm.

Shor's Algorithm

How does Shor's algorithm work? Well it uses a quantum algorithm to solve a particular equation whose solution is likely to factor a number. For the last 30 years or so, most modern factoring algorithms have been converging on a single idea, which is the following. Say that N = P*Q is the number that you wish to factor. Assume that you can find two numbers X and Y such that

X^2 = Y^2 (mod N)

which means that the squares of X and Y leave the same remainder when divided by N. It then follows that

X^2 - Y^2 = 0 (mod N)

(X - Y)(X + Y) = 0 (mod N)

so that N divides (X - Y)(X + Y). It can be shown that there is at least a 50% chance that one of (X - Y) and (X + Y) is divisible by P or Q but not by both, and therefore shares a nontrivial factor with N. If this is the case, then N can be factored by computing gcd(X - Y, N) and gcd(X + Y, N).
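The gcd step can be tried on small numbers. The following is a minimal Python sketch, not taken from any particular factoring library; the function name `factor_from_magic_pair` and the sample values are illustrative assumptions.

```python
from math import gcd

def factor_from_magic_pair(x, y, n):
    """Try to split n using a pair with x^2 = y^2 (mod n).

    Returns a nontrivial factor pair, or None when the pair is
    trivial (x = +/-y mod n) and so reveals nothing about n.
    """
    if (x * x - y * y) % n != 0:
        raise ValueError("x^2 and y^2 leave different remainders mod n")
    for candidate in (gcd(x - y, n), gcd(x + y, n)):
        if 1 < candidate < n:
            return candidate, n // candidate
    return None

print(factor_from_magic_pair(10, 3, 91))  # 10^2 - 3^2 = 91, splits 91
print(factor_from_magic_pair(14, 1, 15))  # 14 = -1 (mod 15), a trivial pair
```

The second call shows the unlucky case: 14 and 1 have the same square modulo 15, but since 14 is just -1 modulo 15 both gcds are trivial and another pair is needed.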

Let's call X and Y a magic pair, and if we can generate enough magic pairs for a given N then we can factor it (think of flipping a coin and waiting for it to turn up heads). So given that factoring is generally believed to be difficult then generating magic pairs must be difficult as well. Most modern factoring algorithms are in fact clever ways to generate magic pairs. While these methods are much faster than brute force approaches, they are far from being efficient.

The breakthrough from Shor was to devise an efficient quantum method for generating magic pairs, which therefore also makes factoring efficient. He did this by reducing the problem of finding magic pairs to what is called computing orders.

Let Z be a number such that 1 < Z < N and gcd(N, Z) = 1 (that is, a number less than N and relatively prime to N). When these conditions hold, there is a smallest positive number K < N such that

Z^K = 1 (mod N)

This value of K is called the order of Z (modulo N). If K is even, say equal to 2*D, then we have that

Z^(2*D) = (Z^D)^2 = 1 (mod N).

But we can take the square root of both sides of the equation

(Z^D)^2 = 1^2 (mod N)

to yield a magic pair by setting X = Z^D and Y = 1. So finding a number with even order immediately gives a magic pair.
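To make the link between orders and magic pairs concrete, here is a small Python sketch. It computes the order by brute force, which is exactly the slow classical step that the quantum algorithm is meant to replace; the function names are hypothetical and chosen only for this illustration.

```python
from math import gcd

def order(z, n):
    """Smallest k >= 1 with z^k = 1 (mod n), found by brute force."""
    if gcd(z, n) != 1:
        raise ValueError("z must be relatively prime to n")
    k, power = 1, z % n
    while power != 1:
        power = (power * z) % n
        k += 1
    return k

def magic_pair_from_order(z, n):
    """Return (z^(k/2) mod n, 1) if the order k of z is even, else None."""
    k = order(z, n)
    if k % 2 != 0:
        return None
    return pow(z, k // 2, n), 1

print(order(2, 21))                  # powers of 2 mod 21: 2, 4, 8, 16, 11, 1
print(magic_pair_from_order(2, 21))  # X = 2^3 = 8, Y = 1, and 8^2 = 64 = 1 (mod 21)
```

With the pair (8, 1) and N = 21, gcd(8 - 1, 21) = 7 recovers a factor.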

Shor invented a brilliant quantum algorithm for efficiently computing orders (the details of which are too complicated to reproduce or even summarise here). Then to factor a number N, just generate a random number Z and quantum compute its order. If the order is even, then create a magic pair and try to factor N; if N does not factor, or the order is not even, then choose another Z and repeat. You won't have to do too many iterations before N will be factored with high probability.
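The loop just described can be sketched classically in Python. This is only an illustration under stated assumptions: `classical_order` is a brute-force stand-in for the quantum order-finding step (so the sketch is slow for large N), `factor_via_orders` and `max_tries` are hypothetical names, and the early return when Z happens to share a factor with N is a convenience added here rather than part of the description above.

```python
import random
from math import gcd

def classical_order(z, n):
    """Brute-force stand-in for the quantum step: smallest k with z^k = 1 (mod n)."""
    k, power = 1, z % n
    while power != 1:
        power = (power * z) % n
        k += 1
    return k

def factor_via_orders(n, rng=random, max_tries=50):
    """Pick random Z, compute its order, and try the resulting magic pair."""
    for _ in range(max_tries):
        z = rng.randrange(2, n)
        shared = gcd(z, n)
        if shared > 1:
            # Z already shares a factor with n (added shortcut, see lead-in).
            return shared, n // shared
        k = classical_order(z, n)
        if k % 2 != 0:
            continue  # odd order: no magic pair, choose another Z
        x = pow(z, k // 2, n)  # magic pair is (x, 1)
        for candidate in (gcd(x - 1, n), gcd(x + 1, n)):
            if 1 < candidate < n:
                return candidate, n // candidate
    return None

print(factor_via_orders(3233))  # 3233 = 53 * 61
```

On a quantum computer only `classical_order` would change; the surrounding loop, the gcd computations and the retry logic are all ordinary classical work.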

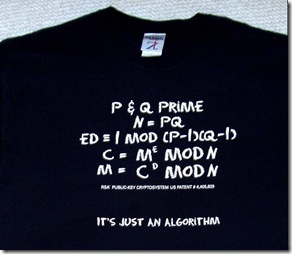

So it is not so much that factoring can be solved easily on a quantum computer but rather that computing orders is easily solved on a quantum computer and factoring can be reduced to computing orders. Any cryptosystem whose security can be reduced to computing orders will also be insecure. Unfortunately this is the case for most well-known public key cryptosystems, including RSA, discrete logarithm based systems like Diffie-Hellman and DSA, as well as elliptic curve systems. It is easy to see how Shor's Algorithm quickly becomes a the-sky-is-soon-to-be-falling story line in the press.

However, there are public key systems that are not impacted by Shor's algorithm. One such scheme is the McEliece cryptosystem whose security is derived from intractable problems in coding theory, which has nothing to do with computing orders. The McEliece cryptosystem is not commonly deployed but perhaps its day will come.

Quantum Speculation

We remarked at the beginning of this post that quantum computing is not a technology for cheating Moore's Law - quantum computers are not general devices that will bring with them exponential improvements over what we have today. We need to craft individual quantum algorithms for each class of problem that we want to solve rapidly on a quantum platform. Computing orders is one such general problem whose quantum solution has dire implications for most of today's common public key systems.

The quantum future that brings the end to the RSA dynasty is quite a few decades away, if not more. Your great grandchildren's RSA keys may be under threat, if we are still using RSA then. You may also have some reason for concern if you are encrypting or signing data with public keys that you are assuming will be secure for the next 50 years or so.

Today from a risk perspective quantum computers are a low-probability high-impact event - a coming catastrophe for many extant public key systems. In 100 years quantum computers are likely to be a high-probability high-impact event - that is, a commonplace tool being used for better or worse.

Our RSA keys are safe for some time yet, until quantum computation matures, and it may even turn out that large scale implementations with many quantum logic gates are difficult to engineer. Certain public key systems will survive even sophisticated quantum computer deployments in any case, unless as yet undiscovered specific quantum algorithms can be tailored to these systems as well. One can speculate endlessly.

When the key bits are extracted from memory some will already have false values due to ground state decay. Pictured left is a decayed Mona Lisa image which contains sufficient information to recognize the original image. The Princeton researchers have devised algorithms for detecting and recovering key bits corrupted by ground state decay, which, after stripping away all the fanfare of the attack, is the most significant contribution of the work. How do we recover the true key from a corrupted copy? For efficiency reasons, the extended key is stored in DRAM rather than the user-supplied key. So for DES, and its triple-DES variants, each key bit will be stored at least 14 times in DRAM. Thus there will be (at least) 14 copies of each key bit to work with, which we can think of as 13 bits of redundancy for each key bit. PGP CTO, Jon Callas,
